A Startlingly Smart Book on Intelligence

Jared Taylor, American Renaissance, January 15, 2021

Robert J. Sternberg (ed.), The Nature of Human Intelligence, Cambridge University Press, 2018, 335 pp. $31.42 (softcover)

This collection of articles about current research in intelligence has a devastating unstated message: Our political and media rulers are ignoramuses. Experts take for granted ideas that would get you drummed out of elite society if you admitted them publicly.

I was initially skeptical of this book. The editor, Prof. Robert Sternberg of Cornell, went through three standard textbooks on intelligence and invited the most cited experts to contribute articles. Prof. Sternberg is a squish on many issues, and I was afraid the “experts” would be squishes, too. I was wrong. There are weak sisters among the 20 authors and even a few I would call goofballs, but this collection includes undeceived researchers such as Thomas J. Bouchard, Richard Haier, Ian Deary, David Lubinski, and even pariahs such as Richard Lynn and Linda Gottfredson. No one could read this book with an open mind and not realize that the experts agree: Intelligence is something real and very important, it can be measured, it is powerfully influenced by genes and reflected in brain structures, men and women are different, and races and nations achieve at different levels partly for genetic reasons.

The author of the unsigned preface writes: “Intelligence, as measured by IQ tests, increased greatly in the 20th century. On the other hand, we are seeing in the 21st century more stupid behavior than one might have believed possible.” One of the reasons is that so many powerful people deny the truth. Needless to say, the mainstream media have ignored this book, even though Cambridge University Press is one of the most prestigious in the world.

Sex differences

From the early days of IQ testing, experts realized that males got higher scores than females on the math/spatial sub-tests that go into intelligence tests. Some people argued that there should therefore be separate sex-based norms, but the great pioneer in IQ testing, Lewis Terman, decided to design and weight IQ tests so that males and females got the same average scores. This hides the average male advantage in math that appears in early adolescence.

For decades, American society has encouraged girls to study math and science, and this has had an effect. Male dominance on math aptitude tests declined into the 1990s. However, the gap has since remained unchanged, and at the very highest levels — scores in the top 0.01 percent — there are four boys for every girl. The score that puts a student in the top 5 percent for females will be in only the top 10 percent for males. It shouldn’t be a surprise that boys are 20 to 60 percent more likely than girls to get perfect scores on the math-heavy AP exams in high school: physics, calculus, statistics.

Children know about these differences. At age five, both boys and girls say their own sex is smarter, but from age seven, both sexes are more likely to say boys and men are smarter. Interestingly, from about the same age, boys and girls know that girls get better grades. Classrooms seem to suit girls better than boys. As one author notes, a girl with an IQ of 100 tends to get As and Bs, while a boy with an IQ of 100 tends to get Bs and Cs. Girls are more likely to go on to college, but the boys in college are smarter, on average.

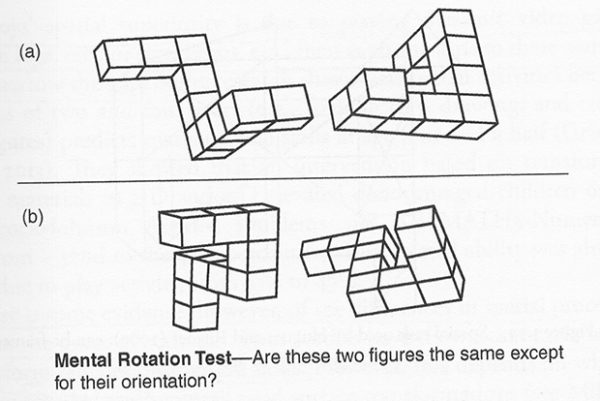

It has long been known that males are better than females at mental rotation. For years, people claimed this was because boys play with Lincoln Logs and Erector sets, but toys like those have almost disappeared in the era of video games, which both sexes play. The male advantage has not changed. (One author writes that intelligence can be measured by performance on video games; he claims correlations with IQ can be as high as 0.93.)

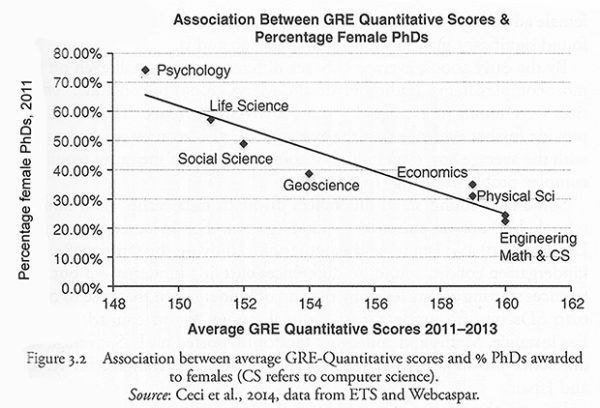

Women are now strongly encouraged to go into math and science, but these fields are still dominated by men. The graph below shows the percentages of PhDs granted to women in various fields, arranged by math intensity. Women get more than 70 percent of the doctorates in psychology, but not even 25 percent in engineering, math, and computer science.

There have been many studies of the interests males and females show in different careers. From adolescence on, boys are much more likely than girls to say they want to be engineers or computer scientists; girls want to be teachers, biologists, or nurses. As a rule — and across every society ever studied — women are more interested in jobs working with people rather than things; men are the opposite.

Men are on average better informed in almost all areas; one exception is knowledge about health and nutrition, in which women are superior.

The biology of intelligence

Several of the papers reflect the excitement about using brain scans in intelligence research. “The revolution in neuroimaging technology is now central to biological studies of intelligence,” writes one author. The amounts of gray matter and white matter in various areas of the brain correlate with IQ, and it may soon be possible to measure IQ accurately from brain scans. The individual connections within each brain are unique and so stable that they can be called brain fingerprints. However, the patterns show a consistent sex difference, so brain-scan IQ tests would have to be devised separately for men and women. The brain-imaging patterns that correlate with intelligence are detectable at an early age, so there can be no doubt that IQ is based in biology and is largely determined by genes.

One author writes that although there is now no known way to raise IQ, it may become possible to do so through neuro-physiological intervention. Would society have an obligation to help low-IQ people and groups? It will eventually be possible to fertilize eggs in vitro, screen the embryo’s genes for good traits — including intelligence — and implant only the best. Will governments ban the rich from doing this and subsidize it for the poor? Progressives love heroic uplift programs for minorities and the poor, but they can’t even talk about anything that might work because they don’t believe in genes or IQ.

The inevitable decline

Several authors write about IQ and aging, noting that it is important to understand this because there are now so many old people. Some of the most valuable data come from Scotland. In 1921 and in 1936, virtually every Scottish 11-year-old took a standard IQ test. Scholars have tracked these Scots as they aged and found that smarter people live longer; one standard deviation in test scores was associated with a 21 percent difference in the chances of surviving to age 76. People with low IQs were more likely to die of accidents, suicide, murder, dementia, heart disease, and smoking-related cancers. Other cancers struck without regard to intelligence. The test records included teacher ratings on whether students were conscientious or belonged to a club. Both were independent predictors of survival into old age.

Coffee-drinking was also associated with longer life, but the effect disappeared after controlling for IQ. Scientists suspect that at least in tea-drinking Scotland, coffee may have been associated with the white-collar, better paid jobs the smart people took, so it is high IQ that led to both coffee-drinking and long life.

(Credit Image: © Dave Rushen/SOPA Images via ZUMA Wire)

People lose intelligence as they age. One of the great values of the Scottish study was to have started with a huge sample of people tested at age 11 who could be retested 50 or 60 years later to compare rates of decline. Differences in total brain volume, cortical thickness, and health of white matter in the brain accounted for up to 20 percent of the variance, and other related brain differences are likely to be discovered. Genes account for at least part of this, with the best known being the APOE gene. Anyone with two copies of the e4 allele is more likely to decline quickly and to get dementia, though this accounts for only about 1 percent of individual differences in the rate of IQ loss.

Researchers tested these aging Scots for more than half a million genetic variants in all parts of their genomes. About one quarter of the rate of IQ decline seems to be associated with genetic factors, which means that as much as three quarters may have to do with how people live. It is clearly good to be active, physically fit, to have had a complex job requiring a lot of education, to be able to speak foreign languages, not to smoke, and to have avoided getting fat, type II diabetes, or high blood pressure. It is also good to have only a few minor physical anomalies, such as abnormal finger curvature, but the impact of all these things is small. Chance factors that apply only to the individual probably account for a large part of the rate of decline. “Brain games,” such as Lumosity, CogniFit, and Cogmed, do not raise IQ and do not slow decline. Practice only makes people better at the games.

This huge Scottish study also confirmed basic facts about IQ: boys and girls had the same average score, but boys had a greater standard deviation. IQ at age 11 accurately predicted adult social status for children whether born rich or poor, and the adult heritability of intelligence was about 0.7. A paper-and-pencil test in childhood can “predict educational achievements and occupational status in midlife; and predict health, illness, and survival.”

IQ decline by middle age has mainly to do with abstract reasoning and figuring out solutions to new problems. Knowledge continues to accumulate. People in their 40s have a harder time than young people learning to use electronic gadgets, but they have more general knowledge.

The chapter by Thomas J. Bouchard recounts Charles Darwin’s remarks on reading Francis Galton’s book, Hereditary Genius, in which Galton argued that intelligence is heritable and has a strong genetic component: “I do not think I ever in all my life read anything more interesting and original. . . . I have always maintained that, excepting fools, men did not differ much in intellect, only in zeal and hard work.” Darwin was an environmentalist on the IQ question — but dropped that position in the face of the evidence.

Prof. Bouchard is one of several authors who slam Stephen Jay Gould for his absurd claims about intelligence, which continue to delight “progressives.” Prof. Bouchard notes that there has been a relentless, obscurantist campaign by sociologists to promote the idea that intelligence can be dramatically raised or lowered by family environment. The hereditarian position is anathema because it means that people at the top in income and status “are genetically superior, at least with respect to IQ.”

Some of the authors look into the difference between IQ and expert performance. Intelligence tests cannot predict who will become a champion chess player or concert pianist. It takes a certain threshold IQ to get to the top in these fields — but not in most sports. A high IQ also helps beginners pick up a new activity, but it does not guarantee they will become experts. That takes different mental qualities: the will to practice for thousands of hours and a capacity to keep analyzing one’s own performance and keep improving. Amateur musicians often reach a plateau and get no better, even if they practice. This may be because after they reach a certain level, they don’t know how to get the most out of practice time. A good teacher helps.

Practice can have dramatic effects. The digit-span test measures the ability to repeat random sequences of numbers after hearing them. One student was able to remember and repeat only seven digits, but after hundreds of hours of practice, could repeat an astonishing 82 digits. He devised a complicated mnemonic method of remembering number sequences. All this work did not give him better recall for random sequences of letters, nor did it raise his IQ.

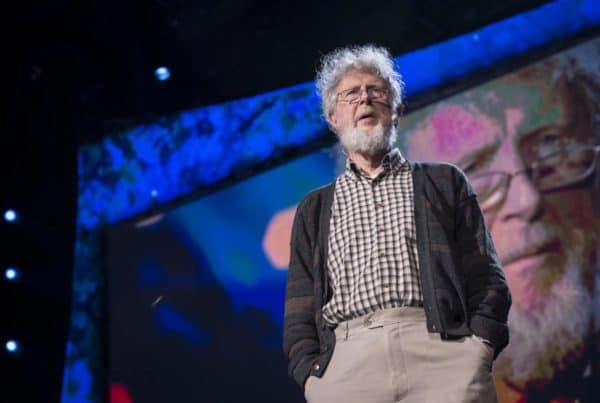

The chapter by James Flynn, who died last month, is about his famed “effect.” In developed countries, there has been a 30-point gain in IQ scores over the last 100 years. If this reflected a real gain in intelligence, it would mean the average European in 1900 had an IQ of 70 — which is impossible. Human genes have not changed dramatically in the last 100 years, so gains have to be caused by environment, and Flynn believed that people got better at taking tests. One hundred years ago, virtually everyone was a subsistence farmer or did undemanding factory or service jobs. Few people had more than six years of schooling and families were large, so that children’s conversation dominated the household. To the extent people had any leisure, it was not intellectually demanding the way even video games are. This rise in IQ scores has stopped in developed countries.

James Flynn at TED in 2013. (Credit Image: James Duncan Davidson)

Flynn thought that because environment raised test scores, environment could bring the black IQ up to the level of whites. However, he always considered this a scientific question and not dogma. As he wrote in this book, “The enemies of truth tried to silence [Berkeley psychologist Arthur] Jensen. Science progresses not by labeling some ideas as too wicked to be true, but by debating their truth.”

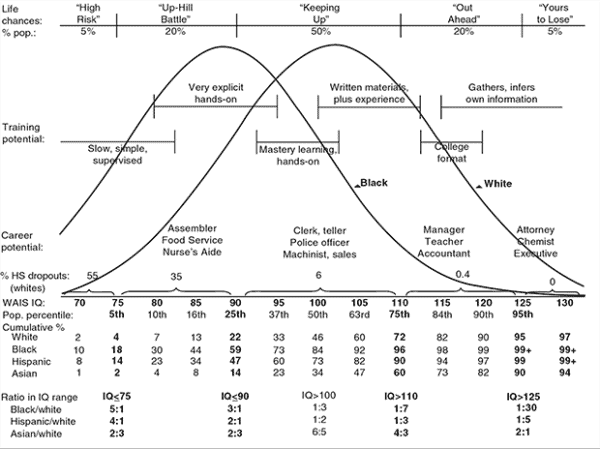

Linda Gottfredson’s chapter is worth the price of this book just for the following graph. The country — and the world — would be vastly better off if racial differences in IQ were universally known and accepted. It is folly of the worst kind to pretend that a black is just as likely as a white to have an IQ of 125 or greater when he is actually one thirtieth as likely. A Hispanic is one fifth as likely.

Prof. Gottfredson devotes much of her chapter to her well-known comparison of life to a long-running IQ test. Every step we take can be seen as an intelligence test, and if you fail in a really bad way, you can die. Prof. Gottfredson writes that civilization has been a strong evolutionary force for raising IQ:

Man-made hazards (such as falls from bridges, boats, and ladders; bites from domesticated animals) increased the relative risk of injury and death in the lower half of the intelligence distribution . . . . Ever more numerous and dangerous man-made hazards (weapons, poison, vehicles) can explain humans’ suddenly accelerated evolution of high intelligence. . . . All the evolution required to ratchet up our species’ intelligence was for these novel hazards to increase the relative risk of crippling and fatal injuries among individuals of below average ability.

Modern medical treatment plans have become so complex that dull people can’t follow them. Also, most doctors don’t understand that as people age — and as they need more medical care — their minds slow down. Doctors should not overestimate the ability of patients to follow instructions.

Prof. Gottfredson also notes that an accurately tested IQ score is a “maximal trait” that shows “what a person can do when circumstances are favorable.” Thinking is hard work, and most people cannot drive their brains at full speed for many hours a day.

Linda Gottfredson

There is a chapter by David Lubinski of Vanderbilt University, who argues that more IQ points help at any level, even past levels of 150 or 160: There is no “sign of diminished returns.” Prof. Lubinski bases his argument on the Study of Mathematically Precocious Youth, in which 13-year-old children were tested to find and track people in the top 0.01 percent — that’s one in 10,000. In that group, people with IQs of 160, say, did better than those with IQs of 155. Every IQ point helps. Success was measured by combining many standards: income, tenured professorships, patents granted, books and articles published, etc.

Prof. Lubinski regrets that education is badly skewed towards trying to bring up the dim people. Very smart people have special needs, but society ignores them.

The chapter by Richard Lynn is about the average IQs of nations, and reports a correlation between IQ and per capita GDP of 0.68. National IQ is also highly correlated with rule of law, political freedom, property rights, freedom of expression, absence of corruption, efficiency of bureaucracy, national happiness, level of trust, low crime rates, and life expectancy at birth. It is no wonder people from low-IQ countries want to emigrate. Prof. Lynn notes that one of the strongest correlations with national IQ is an unfortunate negative one of 0.85 with fertility. None of the high-IQ nations is maintaining its population while the low-IQ nations have high birth rates.

Goofballs

Alas, there is a minority of authors whose foolishness is so widely quoted that they made the cut for inclusion. One is the notorious Howard Gardner, who is still peddling “multiple intelligences” and reverently quotes Stephen Jay Gould on the evils of mental testing. He claims to have discovered “musical intelligence,” “intra-personal intelligence,” “pedagogical intelligence,” “existential intelligence,” and my favorite, the “bodily-kinesthetic intelligence” that makes you a good dancer or athlete. I don’t think anyone marvels at the intelligence of the winner of the Kentucky Derby. Mr. Gardner wants schools to set up “learning centers,” where academic material is pitched in ways that draw on all these “intelligences.” His chapter concludes ruefully: Multiple intelligences theory “has had remarkably little effect within the mainstream of scholarship in psychology and psychometrics.”

Alan Kaufman of Yale wants intelligence testing to be completely subjective: “Assessments should be conducted by a carefully trained professional . . . who is a keen observer of behavior, steeped in cutting edge research and theory. . . . The scores are less important than the interpretation given to them. The tester means more than the test.” Why end objective testing? “The most important goal . . . [is] to reduce the ethnic differences that have plagued (and continue to plague) conventional IQ tests into the present day.”

Scott Kaufman of the University of Pennsylvania has a Theory of Personal Intelligence, which is “a call to be open to the incredible transformations people can undergo when they are allowed to engage in a domain that is aligned with their self-identity.” He wants to “put the whole person back into the intelligence picture,” [emphasis in the original] which is another way of saying he wants to stop talking about intelligence.

Diane Halpern of Claremont McKenna College wants to teach critical thinking as a specific skill and claims that “high IQ scores are not a prerequisite for critical thinking.” Robert Sternberg, the editor, claims that when he added “creative and practical assessments” to tests, this “increased the content validity of the tests at the same time that they decreased ethnic group differences.” He also thinks “students can be taught to think more wisely, but the biggest challenge is teaching the teachers, who are not used to teaching in this way.”

These nostrum peddlers claim — correctly — that their ideas are popular with the public. The hereditarians don’t. Several of them pointed out that the reason policymakers pretend IQ doesn’t exist is because of group differences in average intelligence, which haven’t gone away despite decades of trying; if blacks and whites can’t be made to have the same IQ, IQ must be a myth. Linda Gottfredson wrote that the most important problem facing the field is “public reluctance to entertain human variation in g [general intelligence], and the misinformation and fallacies promoted to enforce it.” She adds that “the No Child Left Behind (NCLB) Act of 2001 [that required that students of all races achieve at the same level] exemplifies the waste and futility of g-denying social policy . . . .” Another writer scoffs at the “immensely popular” idea of personal learning styles, even though no one can show that tuning instruction to these alleged “styles” makes people learn any better.

The problem, of course, is media that refuse to report the truth because the truth might upset non-whites. If the United States were not “diverse,” no one would deny the nature of human intelligence, to quote the title of this book. No one worries that some white people are smarter than other white people. It is multiracialism that make it impossible to accept the truth.